Harnessing AI for Compensation: Why the Best Agents Are the Ones You Control

The latest generation of AI models — Claude Opus 4.6, GPT 5.4, and their successors — can build entire applications from a conversation. Describe what you want, and within minutes you have working software. For anyone building technology, this feels like a genuine inflection point. And it is.

But if you work in compensation, that power comes with a question that matters more than any benchmark or capability score: who's actually in control of what the AI produces?

Agents can handle complexity. They can't read your mind.

Let's start by giving AI its due. Modern agents are remarkably capable. They can navigate complex data models, chain together multi-step workflows, generate code, and reason through ambiguous problems. The idea that AI isn't ready for serious enterprise work is already outdated.

But capability isn't the issue. Intent is.

Compensation is one of those domains where what you want is extraordinarily hard to express. When a compensation leader says "I need a fair merit increase matrix," that single sentence carries an enormous amount of implicit context:

- Budget constraints

- Performance rating distributions

- Geographic pay differentials

- Internal equity targets

- Regulatory thresholds

These are in addition to dozens of org-specific rules that no one has ever fully written down. Comp lives in people's heads, in institutional knowledge, in judgment calls made over years of experience.

No agent — no matter how sophisticated — can infer all of that from a prompt. Not because the technology is limited, but because the intent is inherently human. This is why you need a mechanism for the human to be precise about what they actually mean. And that mechanism, in compensation, is the formula.

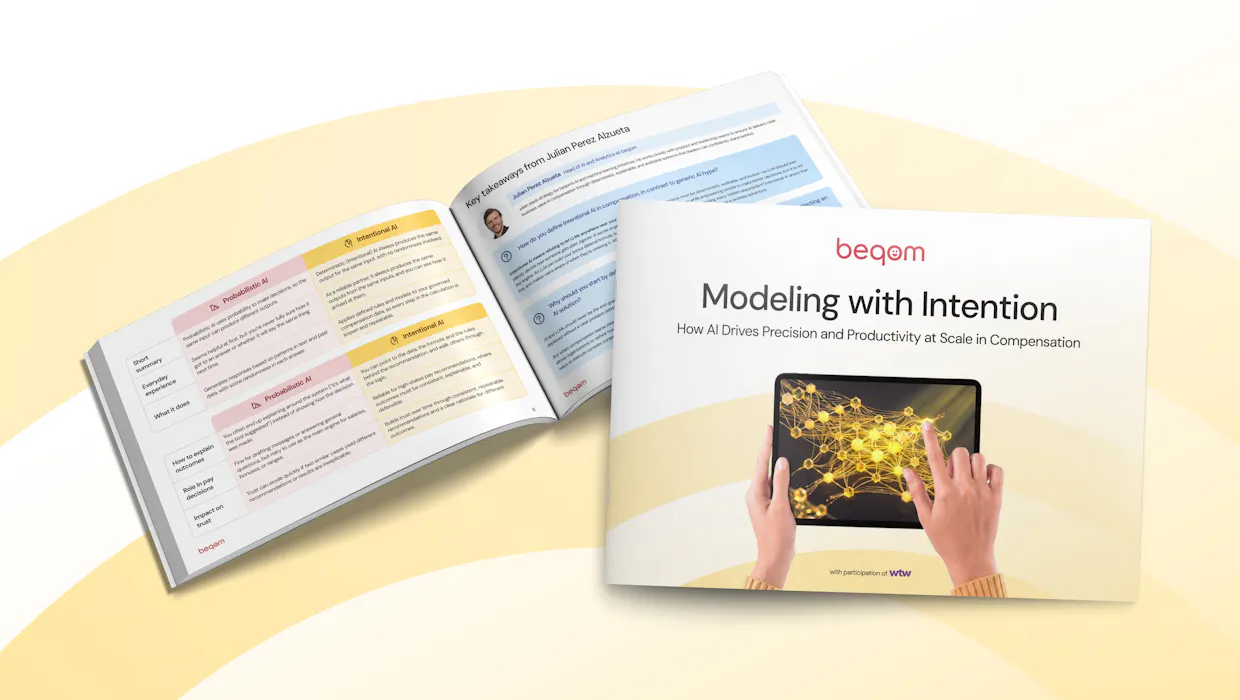

A formula is the contract between human intent and machine execution. It's where a compensation professional says: "This is exactly what I mean by this rule." The agent can help you get there fast — suggesting structures, mapping data fields, generating candidates — but the formula is where the human signs off. That's not a limitation of AI. That's the intelligent use of it.
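To make the idea of a formula as a contract concrete, here is a minimal sketch in Python. It is not beqom's formula syntax. The matrix values, the rating labels, and the names `MERIT_MATRIX` and `merit_increase` are all hypothetical, chosen only to show how a rule that usually lives in someone's head becomes something a reviewer can read and sign off on.

```python
from decimal import Decimal

# Hypothetical merit matrix: (performance rating, position in range) -> increase rate.
# All values are illustrative assumptions, not recommendations.
MERIT_MATRIX = {
    ("exceeds", "below_mid"): Decimal("0.050"),
    ("exceeds", "above_mid"): Decimal("0.035"),
    ("meets", "below_mid"): Decimal("0.030"),
    ("meets", "above_mid"): Decimal("0.020"),
    ("below", "below_mid"): Decimal("0.000"),
    ("below", "above_mid"): Decimal("0.000"),
}


def merit_increase(salary: Decimal, range_midpoint: Decimal, rating: str) -> Decimal:
    """Return the merit increase amount for one employee, rounded to cents."""
    # The "position in range" rule is explicit here instead of implied by a prompt.
    position = "below_mid" if salary < range_midpoint else "above_mid"
    rate = MERIT_MATRIX[(rating, position)]
    return (salary * rate).quantize(Decimal("0.01"))
```

With these assumed values, `merit_increase(Decimal("80000"), Decimal("90000"), "meets")` returns `Decimal("2400.00")`. Every choice a reviewer might disagree with (the rates, the midpoint cutoff, the rounding) is visible in one place.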

The touchpoint problem: reviewing everything or reviewing nothing

Here's a practical reality that anyone who has worked with AI agents knows: they produce a lot of output. An agent building a compensation application might generate dozens of data mappings, business rules, eligibility checks, and calculation steps in a single run. The sheer volume creates a new problem — without clear touchpoints, you're either reviewing everything line by line (defeating the purpose of using AI) or reviewing nothing (which is reckless with pay data).

Formulas solve this. They're the natural touchpoint between the human and the agent because they're readable, testable, and auditable. A compensation analyst can look at a formula and immediately assess whether it reflects what they intended. They can test it against sample data. They can trace the logic. They can say "yes, that captures my rule" or "no, you've misunderstood the eligibility criteria."
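As a rough illustration of what "test it against sample data" can look like, the sketch below checks an eligibility rule against a handful of cases the analyst has already decided by hand. The rule itself (six months of tenure, no "below" rating), the `Employee` fields, and the `mismatches` helper are assumptions made for this example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Employee:
    name: str
    tenure_months: int
    rating: str


def is_eligible(e: Employee) -> bool:
    # Hypothetical rule: at least 6 months of tenure and not rated "below".
    return e.tenure_months >= 6 and e.rating != "below"


# Cases the analyst has decided by hand, including a boundary case.
SAMPLE_CASES = [
    (Employee("A", 24, "meets"), True),
    (Employee("B", 3, "exceeds"), False),
    (Employee("C", 6, "meets"), True),   # exactly 6 months: eligible
    (Employee("D", 36, "below"), False),
]


def mismatches(formula, cases):
    """Return (name, expected, actual) for every case the formula gets wrong."""
    return [
        (e.name, expected, formula(e))
        for e, expected in cases
        if formula(e) != expected
    ]
```

An empty result from `mismatches(is_eligible, SAMPLE_CASES)` means the formula agrees with the analyst on every sample. A candidate that misread the rule, for example one that uses `> 6` and ignores the rating, would surface employees C and D immediately.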

Think of it like building a spreadsheet. Nobody questions the power of Excel. But the value of Excel isn't that it makes decisions for you — it's that you define the formula, and the tool executes it flawlessly across thousands of rows. You control the logic. The tool handles the scale.

AI agents should work the same way for compensation. The agent accelerates everything around the formula — understanding data structures, suggesting approaches, wiring up integrations. But the formula itself is where the human has a real conversation with the system, a touchpoint where intent becomes explicit.

Without these touchpoints, you're trusting a probabilistic system to produce deterministic outcomes. And when it gets something wrong — which it will — the mistake is buried in a chain of reasoning that's nearly impossible to audit after the fact.

That's the real danger: not that AI makes errors, but that the errors are invisible.

A small team of focused agents beats one genius agent

There's a tempting vision in the AI world right now: the all-powerful agent that can do everything. One agent that replaces entire functions, handles every workflow, makes every decision. It's a compelling pitch, but in practice, it fails.

What works — and what we've learned building AI-powered compensation tools — is the opposite. You want a small team of focused agents, each with a narrow scope and a clear job. One agent that understands your data model. Another that generates formula syntax. Another that validates against business rules. Each one does its job well precisely because it doesn't try to do everything else.

This mirrors how effective human teams work. You don't ask one person to be the data engineer, the compensation analyst, the compliance officer, and the formula auditor. You build a team of specialists who collaborate. AI agents work the same way. When an agent has a narrow mandate, it is far less likely to overthink, hallucinate edge cases, or confuse the context of one task with that of another. It stays in its lane and delivers reliably.
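The division of labor can be sketched as three small components that only talk to each other through narrow, typed hand-offs. Plain Python functions stand in for the agents here, and no model is called. The column names, the field names, and the three function names are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FieldMap:
    fields: dict[str, str]  # logical field name -> source column


@dataclass(frozen=True)
class FormulaCandidate:
    expression: str
    fields_used: tuple[str, ...]


def data_model_agent(columns: list[str]) -> FieldMap:
    # Stand-in for an agent whose only job is understanding the data model.
    known = {"base_salary": "BASE_SAL", "target_pct": "TGT_BONUS_PCT"}
    return FieldMap({name: col for name, col in known.items() if col in columns})


def formula_agent(field_map: FieldMap) -> FormulaCandidate:
    # Stand-in for an agent whose only job is proposing formula syntax.
    return FormulaCandidate("base_salary * target_pct", ("base_salary", "target_pct"))


def validation_agent(candidate: FormulaCandidate, field_map: FieldMap) -> list[str]:
    # Stand-in for an agent whose only job is checking a candidate against rules.
    return [
        f"unmapped field: {name}"
        for name in candidate.fields_used
        if name not in field_map.fields
    ]
```

If the source data only has `EMP_ID` and `BASE_SAL`, the validation step reports `unmapped field: target_pct` and nothing else. Because each component has one input and one output, a problem like this can be traced to a single hand-off.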

The moment you try to build a single agent that handles the entire compensation lifecycle — from data ingestion to rule creation to calculation to reporting — you get an agent that's mediocre at everything and reliable at nothing. It loses track of instructions, introduces subtle errors, and creates a debugging nightmare that's worse than the manual process it was supposed to replace.

Narrow scope isn't a compromise. It's an architectural decision. And it's the one that works.

Speed without control isn't an advantage — it's a liability

The conversation about AI in HR too often falls into one of two camps: uncritical enthusiasm ("AI will transform everything!") or reflexive caution ("AI is too risky for compensation"). Both miss the point.

The real question isn't whether to use AI. It's how to direct its power toward outcomes you can stand behind. Speed is valuable: generating a complex incentive formula in minutes instead of days is a genuine competitive advantage. But speed without control isn't an advantage. It's a liability.

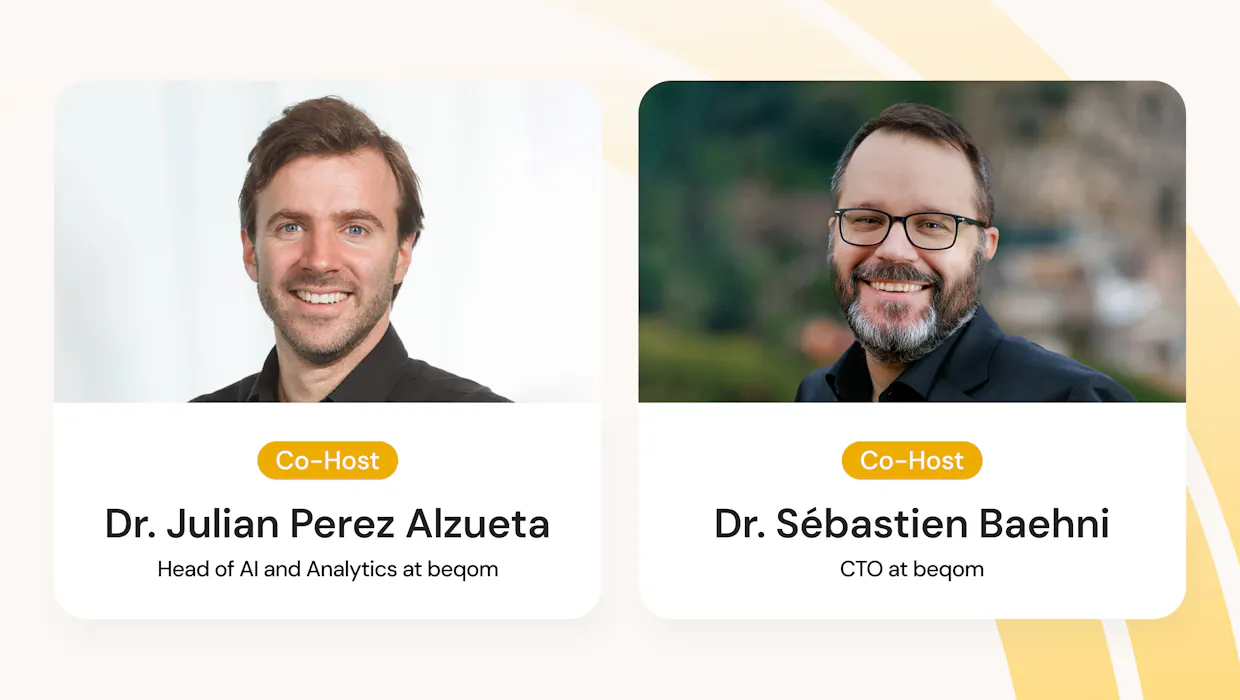

This is what we mean by Intentional AI at beqom. Not AI that's cautious or limited, but AI that's directed — where the power is harnessed toward a specific, human-defined purpose.

- Explainable — every formula the agent generates can be inspected, understood, and audited. No black boxes, no hidden reasoning chains.

- Collaborative — the agent works alongside compensation professionals as a fast, capable partner. It proposes. The human decides. The system executes deterministically.

- Controllable — you own the data, you own the formulas, you own the outcomes. The agent operates within boundaries you define, not boundaries it invents.
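One way to picture "it proposes, the human decides, the system executes deterministically" is an explicit approval gate. The sketch below is an assumption about how such a gate could look, not a description of beqom's product. `ProposedFormula`, `approve`, and `execute` are hypothetical names, and salaries are whole numbers to keep the arithmetic exact.

```python
from dataclasses import dataclass, replace
from typing import Callable, Optional


@dataclass(frozen=True)
class ProposedFormula:
    name: str
    description: str                # human-readable statement of the rule
    compute: Callable[[int], int]   # deterministic calculation
    approved_by: Optional[str] = None


def approve(formula: ProposedFormula, reviewer: str) -> ProposedFormula:
    """Record the human sign-off; returns a new, approved copy."""
    return replace(formula, approved_by=reviewer)


def execute(formula: ProposedFormula, salaries: list[int]) -> list[int]:
    """Run the formula on real data only if a human has signed off."""
    if formula.approved_by is None:
        raise PermissionError(f"formula '{formula.name}' has not been approved")
    return [formula.compute(s) for s in salaries]
```

A draft produced by an agent, say a flat 10% bonus written as `lambda s: s * 10 // 100`, cannot run until someone calls `approve` on it. After that, the same inputs always produce the same outputs.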

What we're building

At beqom, we're building the platform that makes this real. A place where AI agents help compensation teams create intelligence across the entire compensation workflow – from data exploration and pay analysis to rule design and scenario modeling.

One of the core use cases for compensation professionals is building formulas on top of their data: quickly, efficiently, and in a way that is clear and explainable to the people who are accountable for pay decisions. But formulas are just the beginning. The same principles of control and explainability extend to every place where agents interact with compensation logic, and they apply to the creation of the agents themselves.

The agent handles the heavy lifting: navigating complex data models, suggesting formula structures, mapping fields, generating candidates. But every formula passes through human hands before it touches real compensation data. The touchpoints are built into the workflow by design, not bolted on as an afterthought.

Because we believe the companies that will get AI right in HR aren't the ones that hand everything to the machine. They're the ones that know exactly where to draw the line — and build systems that make that line easy to hold.

The future of AI in compensation isn't about replacing human judgment. It's about giving human judgment the fastest, most powerful tools it's ever had, while making sure it remains exactly that: human.

Ready to put AI to work without losing control?

Don't let your compensation logic get lost in a black box. Explore how beqom uses focused AI agents to accelerate your workflow while keeping your data, formulas, and outcomes entirely in your control.